Protect Data In ChatGPT

Secure ChatGPT.

Safeguard AI Transformation.

Unleash the Power of AI. Securely.

Get visibility from data discovery and classification with Data Security Posture Management (DSPM)

Enforce DLP policies for ChatGPT and prevent data loss with Forcepoint Data Loss Prevention (DLP)

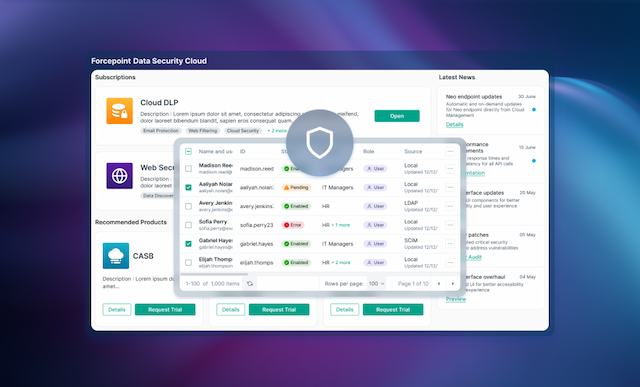

Secure access to cloud and web apps and get continuous control over data with Forcepoint Data Security Cloud

Prevent Data Loss with DLP for ChatGPT

Utilize 1,700+ out-of-the-box classifiers to stop data loss

Block copy and paste of sensitive information into web browsers and cloud apps

Unify policy management and enforce policies everywhere users access data or use generative AI

Safeguard AI Transformation with ChatGPT Data Security

Forcepoint gives organizations comprehensive visibility and control over their data and ChatGPT use, so they can maximize ChatGPT's benefits while minimizing risk.

Uncover Risk: Get total visibility over your sensitive data and its usage

Control Data: Stop users from sharing private information with ChatGPT

Prevent Misuse: Manage access and enforce security policies anywhere

Control Access: Grant or restrict access by user, group, device management status and other criteria

Boost productivity with ChatGPT while keeping sensitive data out of it.

Know what data you have

Get visibility from data discovery and classification with Data Security Posture Management (DSPM).

Prevent exfiltration of sensitive data

Enforce DLP policies for ChatGPT and prevent data loss with Forcepoint Data Loss Prevention.

Unify policy management and data visibility

Secure access to cloud and web apps and get continuous control over data with Forcepoint Data Security Cloud.

Demonstrate regulatory compliance

Use proven DLP policies and automated reporting to stay compliant and speed up audits.

Analyst recommended.

User approved.

Forcepoint data security solutions are consistently ranked among the top in the industry by analysts and customers alike.

Forcepoint has been named a Leader in the IDC MarketScape: Worldwide DLP 2025 Vendor Assessment.

Forcepoint was named 2024 Global DLP Company of the Year by Frost & Sullivan for the second consecutive year.

Forcepoint was recognized as a Strong Performer in The Forrester Wave™: Data Security Platforms, Q1 2025.

Our Customer Stories

"Forcepoint DLP has been great in protecting our data. It continually monitors data where in use, at rest or in motion. Ability to protect data from both external and internal factors makes it great."

Everything You Need to Know About Data Security

ANALYST REPORT

Gartner®: Implement AI Security in the Generative AI Workflow

WEBCAST

AWARE 2026: Advancing AI Security

EBOOK

5 Steps to Secure Generative AI

WEBCAST

Forcepoint Data Security Cloud: A Full On-Demand Demo