4 Ways Generative AI Will Impact CISOs and Their Teams: Gartner® Report

Recommendations include using DLP to protect proprietary data


Forcepoint

Generative AI is a relatively new phenomenon with rapid and widespread adoption – and its impact on CISOs and their teams is still being understood.

In a recent report, 4 Ways Generative AI Will Impact CISOs and Their Teams, Gartner® examines the security ramifications of generative AI, with recommendations for how CISOs can proactively take steps to defend against new and expanded threats.

Among the generative AI impacts that the report says CISOs and security teams need to prepare for: “Manage and monitor how the organization ‘consumes’ GenAI: ChatGPT was the first example; embedded GenAI assistants in existing applications will be the next. These applications all have unique security requirements that are not fulfilled by legacy security controls.”

Gartner on how to control generative AI consumption

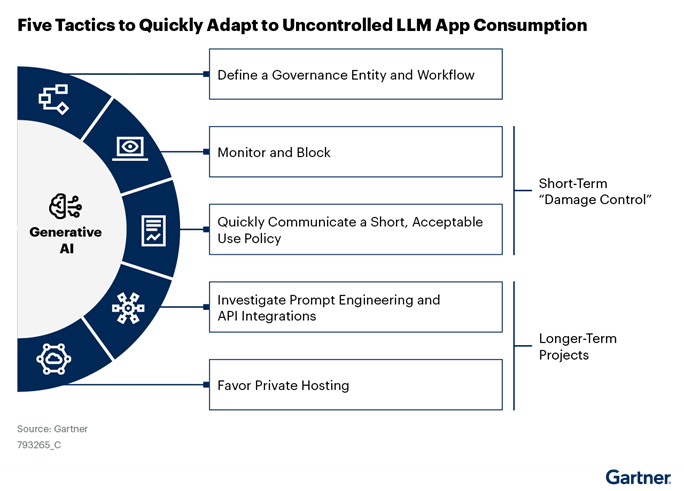

While user training and acceptable use policies are critical components of a robust security strategy for generative AI, relying on the diligence of individual employees is not a viable approach on its own. It makes more sense to apply security controls that prevent data exfiltration caused by careless use of generative AI applications. On this topic, Gartner lists “five tactics to gain better control of GenAI consumption”: define a governance entity and workflow, monitor and block, quickly communicate a short acceptable use policy, investigate prompt engineering and API integrations, and favor private hosting.

Gartner on blocking known generative AI domains and applications

Workers will always want to use productivity-enhancing tools, and placing them off-limits may push employees toward shadow AI on unmanaged personal devices. Here is Gartner again on the topic:

“Security leaders must acknowledge that blocking known domains and applications is not a sustainable or comprehensive option. It will also trigger ‘user bypasses,’ where employees would share corporate data with unmonitored personal devices in order to get access to the tools. Many organizations already shifted from blocking to an ‘approval’ page with a link to the organization’s policy and a form to submit an access request.”

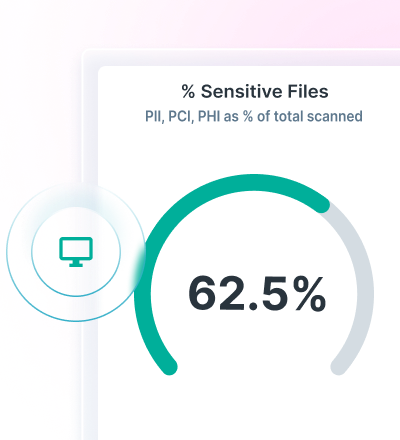

Instead of removing access to generative AI applications like ChatGPT for all employees, organizations can use the Data Loss Prevention (DLP) capabilities that Forcepoint delivers through the Forcepoint ONE platform to reap the benefits of generative AI without losing control of sensitive data.

Forcepoint ONE handles the access piece of the equation and also monitors web traffic between users and generative AI apps, including image generators such as DALL-E. The hyperscaler-based Forcepoint ONE platform also provides centralized policy enforcement, enabling security teams to curate secure access to thousands of generative AI applications.

CISOs can use a single policy to manage Forcepoint Data Security Solutions across all channels, and Forcepoint ONE offers unified reporting with a single-pane-of-glass view of enterprise data security. This makes it simpler to set and enforce generative AI policies that let employees benefit from the technology without exposing the organization to the risk of data leakage.

###

Source: Gartner, 4 Ways Generative AI Will Impact CISOs and Their Teams, By Jeremy D'Hoinne, Avivah Litan, Peter Firstbrook, 29 June 2023

GARTNER is a registered trademark and service mark of Gartner, Inc. and/or its affiliates in the U.S. and internationally and is used herein with permission. All rights reserved.

Gartner does not endorse any vendor, product or service depicted in its research publications, and does not advise technology users to select only those vendors with the highest ratings or other designation. Gartner research publications consist of the opinions of Gartner’s research organization and should not be construed as statements of fact. Gartner disclaims all warranties, expressed or implied, with respect to this research, including any warranties of merchantability or fitness for a particular purpose.


Forcepoint-authored blog posts are based on discussions with customers and additional research by our content teams.

- Want to learn more about how generative AI will impact CISOs and their teams? Read the full Gartner report for more insights.

