RECOMMENDED PRODUCTS

PRODUCT INTEGRATIONS

SEE IT IN ACTION

How to protect your data everywhere

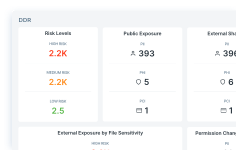

See our Data Detection & Response (DDR) software in action

STILL UNSURE?

Talk to an expert

Request a free data risk assessment

OUR PLATFORM

PROBLEMS WE SOLVE

INDUSTRIES WE SERVE

CUSTOMER STORIES

Why the financial sector is the primary target of AI attacks

Accuracy and transparent reporting

GUIDE

The practical guide for executives on preventing data loss

Explore the guide

GUIDE

Forcepoint AI Mesh

Get to know AI Mesh

ANALYST REPORT

Gartner: 2025 Market Guide for Data Security Posture Management

Read the report

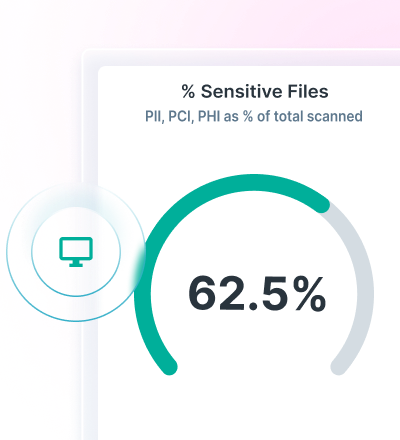

RISK ASSESSMENT

Request a free data risk assessment

Get started

WHY FORCEPOINT

COMPARE